[SIGGRAPHAsia16Workshop] A Virtual Reality Platform for Dynamic Human-Scene Interaction

Abstract

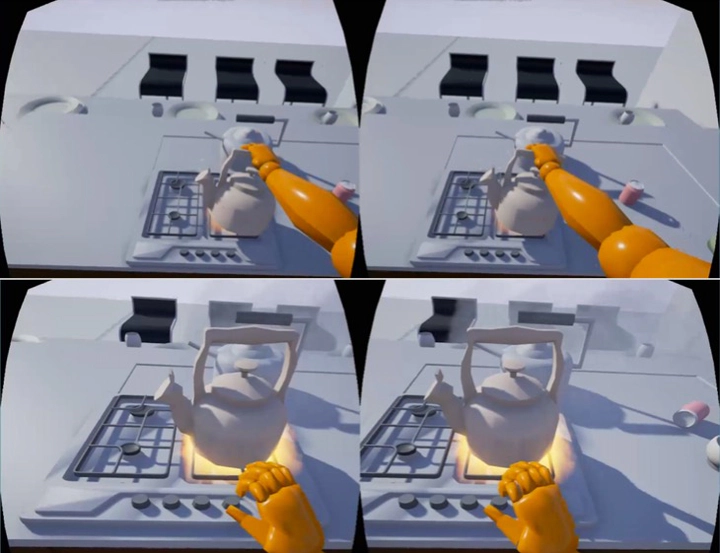

Both synthetic static and simulated dynamic 3D scene data are highly useful in computer vision and robot task planning. Yet the virtual nature of such data makes it difficult for real agents to interact with it in an intuitive way. As a result, currently available datasets are either static or greatly simplified in terms of interactions and dynamics. In this paper, we propose a system that integrates Virtual Reality with human and finger pose tracking to allow agents to interact with virtual environments in real time. Segmented object and scene data are used to construct a scene within Unreal Engine 4, a physics-based game engine. We then use the Oculus Rift headset together with a Kinect sensor, a Leap Motion controller, and a dance pad to navigate and manipulate objects inside synthetic scenes in real time. We demonstrate how our system can be used to construct both a multi-jointed agent representation and a fine-grained finger pose. Finally, we propose ways in which our system can be used for robot task planning and image semantic segmentation.