Abstract

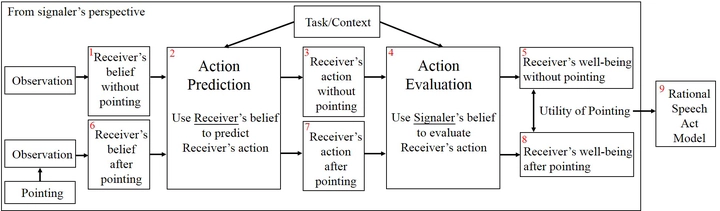

Although pointing is sparse, overloaded, and indirect, it allows humans to effectively decode shared information, (ex)change their minds, and plan accordingly. Pointing is an invitation to jointly attend to an object, which triggers mutual inference between agents about each other’s minds. Relevance is a fundamental assumption underlying all human communication, including pointing. We define relevance as how much a signaler’s belief can make a positive difference to its receiver’s well-being. We build a Theory of Mind (ToM) model to test our definition of relevance, using pointing as a case study. In two experiments, we test our relevance model in a classic artificial intelligence (AI) task, the Wumpus world, with the key difference that a guide points to help a hunter. Agents with our relevance model gain significantly higher rewards than agents that ignore signals from the guide. Agents with our model also outperform agents that receive an additional observation of the environment. The results show that the power of pointing comes from the ToM inference of relevance, rather than from more precise individual perception.
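
One way to make the verbal definition of relevance concrete is as a utility gain: how much better off the receiver would be acting on the signaler's belief than on its own. The sketch below is only an illustration under that assumed reading, not the model developed in this paper. The names `relevance`, `expected_utility`, and `best_action`, the two-state world, and the reward values are all hypothetical.

```python
from typing import Callable, Dict, Sequence

# A belief maps each possible world state to a probability (assumed representation).
Belief = Dict[str, float]
Utility = Callable[[str, str], float]  # utility(action, state)


def expected_utility(action: str, belief: Belief, utility: Utility) -> float:
    """Expected utility of an action under a belief over world states."""
    return sum(p * utility(action, state) for state, p in belief.items())


def best_action(actions: Sequence[str], belief: Belief, utility: Utility) -> str:
    """Action that maximizes expected utility under the given belief."""
    return max(actions, key=lambda a: expected_utility(a, belief, utility))


def relevance(signaler: Belief, receiver: Belief,
              actions: Sequence[str], utility: Utility) -> float:
    """Assumed reading of relevance: the receiver's utility gain, evaluated
    under the signaler's belief, from acting on the signaler's belief
    instead of its own."""
    informed = best_action(actions, signaler, utility)
    uninformed = best_action(actions, receiver, utility)
    return (expected_utility(informed, signaler, utility)
            - expected_utility(uninformed, signaler, utility))


# Hypothetical two-state world: a pit lies either to the left or to the right.
def step_utility(action: str, state: str) -> float:
    fell = (action, state) in {("go_left", "pit_left"), ("go_right", "pit_right")}
    return -10.0 if fell else 1.0


ACTIONS = ["go_left", "go_right"]
guide_belief = {"pit_left": 1.0, "pit_right": 0.0}   # the guide knows where the pit is
hunter_belief = {"pit_left": 0.3, "pit_right": 0.7}  # the hunter guesses wrongly

gain = relevance(guide_belief, hunter_belief, ACTIONS, step_utility)
no_gain = relevance(guide_belief, guide_belief, ACTIONS, step_utility)
```

In this toy setting the guide's belief is highly relevant because it changes the hunter's choice and avoids the pit, whereas a belief the hunter already holds changes nothing and has zero relevance.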