[IROS20] Human-Robot Interaction in a Shared Augmented Reality Workspace

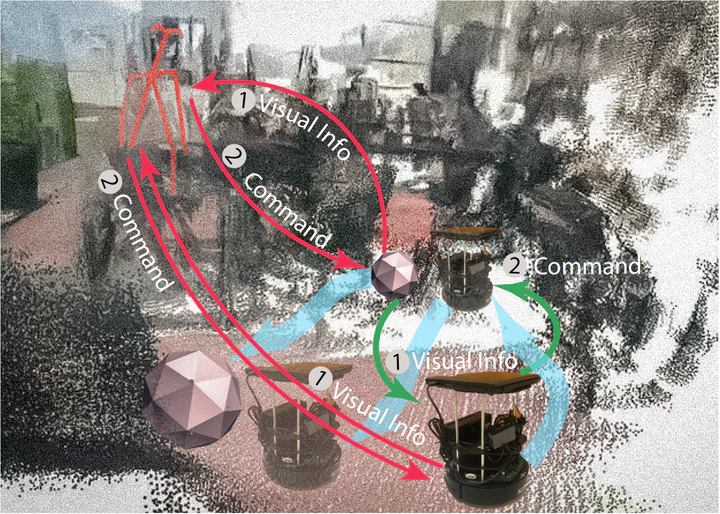

A comparison between the existing AR systems and the proposed shared AR workspace. Existing AR systems are limited to an active-human, passive-robot, one-way communication setting, in which a physical robot only reacts to human commands issued via AR devices and never takes its own initiative; see the red arrows. The proposed shared AR workspace establishes an active-human, active-robot, bi-directional communication channel that allows a robot to perceive and proactively manipulate holograms as human agents do; see the green arrows. By offering shared perception and manipulation, the proposed shared AR workspace affords more seamless HRI.

Abstract

We design and develop a new shared Augmented Reality (AR) workspace for Human-Robot Interaction (HRI) that establishes bi-directional communication between human agents and robots. In a prototype system, the shared AR workspace enables shared perception: a physical robot not only perceives the virtual elements in its own view but also infers the utility of the human agent, that is, the cost the human needs to perceive and interact in AR, by sensing the human agent's gaze and pose. This HRI design also affords shared manipulation, wherein the physical robot can control and alter virtual objects in AR as an active agent; crucially, the robot can proactively interact with human agents instead of merely executing received commands. In experiments, we design a resource collection game that qualitatively demonstrates how a robot perceives, processes, and manipulates in AR and quantitatively evaluates the efficacy of HRI using the shared AR workspace. We further discuss how the system can potentially benefit future HRI studies that would otherwise be challenging.
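To make the idea of "the cost needed to perceive and interact in AR" more concrete, the sketch below shows one possible way a robot could score holograms from a sensed human gaze and pose. This is a hypothetical toy model, not the cost function used in the paper. It assumes that perception cost is the angle between the human's gaze ray and the direction to a hologram, that interaction cost is the Euclidean distance from the human to the hologram, and that the two are combined with illustrative weights. The names `HumanState`, `perception_cost`, `interaction_cost`, `total_cost`, and `cheapest_hologram` are all invented for this example.

```python
from __future__ import annotations

import math
from dataclasses import dataclass
from typing import List, Tuple

Vec3 = Tuple[float, float, float]


@dataclass
class HumanState:
    """Hypothetical sensed human state: head position and gaze direction."""
    position: Vec3
    gaze_dir: Vec3  # need not be unit length


def _sub(a: Vec3, b: Vec3) -> Vec3:
    return (a[0] - b[0], a[1] - b[1], a[2] - b[2])


def _dot(a: Vec3, b: Vec3) -> float:
    return a[0] * b[0] + a[1] * b[1] + a[2] * b[2]


def _norm(v: Vec3) -> float:
    return math.sqrt(_dot(v, v))


def perception_cost(human: HumanState, hologram: Vec3) -> float:
    """Assumed perception cost: angle (radians) between gaze and hologram direction."""
    to_obj = _sub(hologram, human.position)
    denom = _norm(to_obj) * _norm(human.gaze_dir)
    if denom == 0.0:
        return 0.0  # degenerate case: hologram at the head or zero gaze vector
    cos_theta = max(-1.0, min(1.0, _dot(to_obj, human.gaze_dir) / denom))
    return math.acos(cos_theta)


def interaction_cost(human: HumanState, hologram: Vec3) -> float:
    """Assumed interaction cost: straight-line distance to the hologram."""
    return _norm(_sub(hologram, human.position))


def total_cost(human: HumanState, hologram: Vec3,
               w_perceive: float = 1.0, w_interact: float = 1.0) -> float:
    """Weighted sum of the two assumed costs (weights are illustrative)."""
    return (w_perceive * perception_cost(human, hologram)
            + w_interact * interaction_cost(human, hologram))


def cheapest_hologram(human: HumanState, holograms: List[Vec3]) -> Vec3:
    """Return the hologram the human could perceive and reach at the lowest cost."""
    return min(holograms, key=lambda h: total_cost(human, h))
```

For example, with a human at the origin looking along +x, a hologram at `(2.0, 0.0, 0.0)` costs 2.0 (directly in view, 2 m away), while one at `(0.0, 1.0, 0.0)` costs about 2.57 (closer, but 90° off gaze), so `cheapest_hologram` prefers the first. A robot using such a score could, for instance, move a hologram toward a lower-cost location for the human; a real system would need a cost model grounded in the actual sensing and task.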